As developers, we often need to store or cache data for a while and reuse it later for another operation. There are several caching mechanisms for this, most commonly in-memory caching and distributed caching. This article covers Redis, an open-source, in-memory key/value store used primarily as an application cache or quick-response database.

How to configure Redis in your application?

To begin with, install the necessary cache packages:

    npm i @nestjs/cache-manager
    npm i cache-manager
    npm i cache-manager-redis-store

Next, configure the Redis host and port in your .yml file:

    redis:
      host: localhost
      port: 6379

Once the packages are installed and the configuration is in place, import the CacheModule into your app.module.ts file:

    import { Module } from '@nestjs/common';
    import { CacheModule } from '@nestjs/cache-manager';
    import * as redisStore from 'cache-manager-redis-store';
    import config from 'config';

    // Read the redis section of the config file (host and port)
    const rConf = config.get<{ host: string; port: number }>('redis');

    @Module({
      imports: [
        CacheModule.register({
          isGlobal: true, // make the cache available in every module
          ttl: null, // no default TTL; expiry is set per key when storing
          store: redisStore,
          host: rConf.host, // localhost
          port: rConf.port, // 6379
        }),
      ],
      controllers: [],
      providers: [],
    })
    export class AppModule {}

Storing data in the Redis store

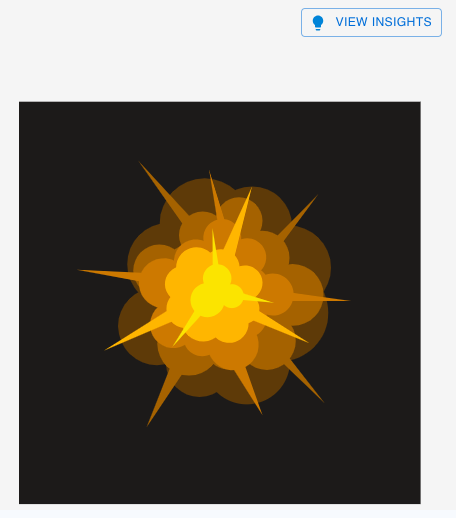

To understand this, let us look at a working scenario in which some data needs to be stored in Redis. Suppose I have multiple images, and clicking an image's insight button should show insights about that image.

Each click on an insight button sends a request to OpenAI and returns the response for that image. There is no need to send the request again when the button is clicked a second time for the same image. Instead, I can store the insights from the first OpenAI response in Redis and read them from there on later clicks. This saves the allotted OpenAI tokens and improves performance.

Now let's see how to implement this. First, generate a unique key for each image and send it to the backend along with the image data.
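The article does not prescribe how the unique key is produced. One option, shown here as a minimal sketch, is to hash something that identifies the image, such as its URL. The helper name `makeInsightKey` and the `insight:` prefix are assumptions made for illustration, and the sketch assumes a Node.js context; in a browser, the Web Crypto API would play the same role.

```typescript
import { createHash } from 'node:crypto';

// Hypothetical helper: derives a stable cache key from an image URL.
// The same URL always yields the same key, so repeated clicks on the
// same image map to the same cache entry.
function makeInsightKey(imageUrl: string): string {
  const digest = createHash('sha256').update(imageUrl).digest('hex');
  // The "insight:" prefix is an illustrative naming choice, not a requirement.
  return `insight:${digest}`;
}

const keyA = makeInsightKey('https://example.com/images/a.png');
const keyB = makeInsightKey('https://example.com/images/b.png');
console.log(keyA === makeInsightKey('https://example.com/images/a.png')); // true
console.log(keyA === keyB); // false
```

A key built this way is what would be sent as insightKey in the request.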

    axios.post('/your-endpoint-url', {
        imageData, // image data
        insightKey, // unique key for each image
      })
      .then((res) => {
        // handle response here
      })
      .catch((error) => {
        console.error(error);
      });

In the backend service.ts file, check whether the unique insight key is already present in the Redis store before calling the OpenAI API. If the key is present, return the value stored under it. Otherwise, call OpenAI and, once the response arrives, store it in Redis as a key/value pair for later requests.

    import { Inject, Injectable } from '@nestjs/common';
    import { CACHE_MANAGER } from '@nestjs/cache-manager';
    import { Cache } from 'cache-manager';

    @Injectable()
    export class ImageInsightsService {
      constructor(
        @Inject(CACHE_MANAGER) private readonly cacheManager: Cache,
        // other injected repositories, if needed
      ) {}

      async getOpenaiResponseForImage(postData: any) {
        try {
          const key = postData?.insightKey;

          // Return the insights from the Redis store if they are already cached
          const cachedItem = await this.cacheManager.get(key);
          if (cachedItem) {
            return {
              success: true,
              data: cachedItem,
            };
          }

          // Handling the image data and getting the OpenAI response goes here;
          // `response` below is the result of that call.

          // Once we receive the response from OpenAI, read the message text:
          const responseData = response.choices[0].message.content;
          const parsedContent = JSON.parse(responseData);
          const insightsArray = Object.keys(parsedContent).map((insightKey) => ({
            name: insightKey,
            description: parsedContent[insightKey],
          }));

          // Store the formatted insights in Redis for 10 minutes (ttl: 600 seconds)
          await this.cacheManager.set(key, insightsArray, { ttl: 600 });

          return {
            success: true,
            data: insightsArray,
          };
        } catch (err) {
          console.error(err);
          // Report the failure explicitly instead of returning undefined
          return { success: false, data: null };
        }
      }
    }
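The formatting step in the service, which turns the parsed JSON object into an array of name/description pairs, can be tried in isolation. The sketch below is not the service's actual code: the `Insight` type, the `toInsights` function, and the sample string are assumptions made for illustration, and it assumes the model replies with a flat JSON object.

```typescript
// Shape of one formatted insight, mirroring the array built in the service.
interface Insight {
  name: string;
  description: string;
}

// Converts a JSON object string such as '{"insights":"..."}' into Insight[].
// Throws if the text is not valid JSON or is not a plain JSON object.
function toInsights(rawContent: string): Insight[] {
  const parsed: unknown = JSON.parse(rawContent);
  if (typeof parsed !== 'object' || parsed === null || Array.isArray(parsed)) {
    throw new Error('Expected a JSON object of insight names to descriptions');
  }
  return Object.entries(parsed as Record<string, unknown>).map(
    ([name, description]) => ({ name, description: String(description) }),
  );
}

// Hypothetical model output, used only to show the resulting shape.
const sample = '{"insights":"A stylized explosion.","mood":"Energetic"}';
console.log(toInsights(sample));
```

Validating the parsed value before mapping keeps a malformed model reply from being cached as if it were a valid result.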

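In ImageInsightsService above, the insights are stored for 10 minutes. If the API is called again with the same key within those 10 minutes, the response comes from the store rather than from OpenAI. Once the 10 minutes have passed, Redis removes the key and its value, and the next request stores a fresh OpenAI response. Note that the { ttl: 600 } form, with the TTL in seconds, is the cache-manager v4 signature; from v5 on, set takes the TTL as a plain number in milliseconds.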
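The hit, miss, and expiry behaviour described above can be demonstrated without Redis or OpenAI. The following is a minimal sketch of the same check-then-store pattern (often called cache-aside), using an in-memory map as a stand-in for the Redis store. `TtlCache`, `getOrLoad`, and the fake clock are hypothetical names introduced for this illustration and are not part of cache-manager.

```typescript
// In-memory stand-in for the Redis store, for illustration only.
// `now` is injectable so expiry can be shown without actually waiting.
class TtlCache<V> {
  private readonly entries = new Map<string, { value: V; expiresAt: number }>();
  private readonly now: () => number;

  constructor(now: () => number = () => Date.now()) {
    this.now = now;
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // expired: behave as if the key was never set
      return undefined;
    }
    return entry.value;
  }

  set(key: string, value: V, ttlSeconds: number): void {
    this.entries.set(key, { value, expiresAt: this.now() + ttlSeconds * 1000 });
  }
}

// Cache-aside: return the cached value if present; otherwise call `load`,
// store its result for `ttlSeconds`, and return it.
async function getOrLoad<V>(
  cache: TtlCache<V>,
  key: string,
  ttlSeconds: number,
  load: () => Promise<V>,
): Promise<V> {
  const cached = cache.get(key);
  if (cached !== undefined) return cached;
  const fresh = await load();
  cache.set(key, fresh, ttlSeconds);
  return fresh;
}

async function demo(): Promise<void> {
  let clock = 0; // fake clock, in milliseconds
  let calls = 0; // counts calls to the simulated slow API
  const cache = new TtlCache<string>(() => clock);
  const load = async () => {
    calls += 1;
    return `insight #${calls}`;
  };

  await getOrLoad(cache, 'key-1', 600, load); // miss: calls load
  await getOrLoad(cache, 'key-1', 600, load); // hit: served from the cache
  clock += 600 * 1000; // 10 minutes pass
  await getOrLoad(cache, 'key-1', 600, load); // expired: calls load again
  console.log(calls); // prints 2
}

demo();
```

Passing the clock in as a function keeps the expiry logic testable; with the real Redis store, expiry is handled by Redis itself.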

You can see the data stored in the Redis store by following these steps at the command prompt:

- First, open the Redis CLI with the following command:

      redis-cli

- To see all the keys stored in the cache:

      keys *

  The result will look like this:

      127.0.0.1:6379> keys *
      1) "key-1"
      2) "key-2"

- To see the data for a specific key from the list above, run the following command:

      get key-1

  It will show the stored value for that key:

      127.0.0.1:6379> get key-1
      "[{\"name\":\"insights\",\"description\":\"The image depicts a stylized explosion with a central bright area of yellow and orange circular shapes, radiating outward. The explosion effect includes sharp, angular elements extending in various directions, adding dynamism and emphasizing the action. The background is a solid dark color, which enhances the vivid colors of the explosion, drawing the viewer\xe2\x80\x99s attention to the central event.\"}]"

Conclusion

Caching with Redis is a powerful way to improve the performance and efficiency of applications by reducing redundant computations and external API calls. The example above showed how to use Redis in a Nest.js application to cache OpenAI insights for images, giving faster response times and better resource usage. You can apply the same caching approach to many other use cases.

By storing responses temporarily in Redis, we avoid unnecessary API calls to OpenAI and save tokens. This approach not only improves application speed but also makes the application more cost-effective.

Software engineer working as a full stack developer.